lodion

14h ago

•

100%

Nope, I'm a fool. SES is in sandbox mode, so it won't send to anyone but my verified identities. Rolling back until AWS move me to production :(

After some users have had issues recently, I've finally gotten around to putting in place a better solution for outbound email from this instance. It now sends out via Amazon SES, rather than directly from our OVH VPS. The result is emails should actually get to more people now, rather than being blocked by over-enthusiastic spam filters... looking at you Outlook and Gmail.
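For anyone curious what relaying mail through SES looks like from a script, here is a minimal Python sketch using the SES SMTP interface. The region, addresses and credentials are placeholders made up for illustration, not this instance's settings, and Lemmy itself is pointed at an SMTP server through its own config rather than code like this. While an account is still in the SES sandbox, a send to an unverified recipient is rejected.

```python
import smtplib
from email.message import EmailMessage

# Assumed values for illustration only; not the instance's real settings.
SES_REGION = "ap-southeast-2"
SES_SMTP_HOST = f"email-smtp.{SES_REGION}.amazonaws.com"
SES_SMTP_PORT = 587  # SES accepts STARTTLS connections on this port


def build_message(sender: str, recipient: str, subject: str, body: str) -> EmailMessage:
    """Build a plain-text email ready to hand to an SMTP relay."""
    msg = EmailMessage()
    msg["From"] = sender
    msg["To"] = recipient
    msg["Subject"] = subject
    msg.set_content(body)
    return msg


def send_via_ses(msg: EmailMessage, smtp_user: str, smtp_password: str) -> None:
    """Relay the message through SES using SES SMTP credentials."""
    with smtplib.SMTP(SES_SMTP_HOST, SES_SMTP_PORT, timeout=30) as smtp:
        smtp.starttls()                       # upgrade the connection to TLS
        smtp.login(smtp_user, smtp_password)  # SES SMTP credentials, not AWS keys
        smtp.send_message(msg)
```

Calling `send_via_ses(build_message(...), user, password)` needs real SES SMTP credentials and a verified sender identity, so it is left out of the sketch.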

lodion

3w ago

•

85%

Usually I'd lean towards removing an editorialized title such as this, but under the circumstances I'm not confident the title isn't 100% correct.

lodion

4w ago

•

100%

Your office sounds awesome.

lodion

1mo ago

•

100%

I suspect there are updates to pict-rs that may help. Will check tonight.

lodion

1mo ago

•

100%

Money isn't the issue, it's time. I'm time poor, simply don't have time to set it up.

lodion

1mo ago

•

100%

When 0.19.6 is out of beta I'll upgrade, then we'll need to wait for LW and others to upgrade.

lodion

2mo ago

•

100%

MinRes aren't in the CBD, they're out in Osborne Park.

lodion

2mo ago

•

100%

That's fine.

lodion

2mo ago

•

100%

Sorry not interested in any hooks into Reddit, or additional software requiring ongoing management.

lodion

2mo ago

•

100%

Plain old reboot. When 0.19.6 is out of beta I'll upgrade to it. We have enough issues without running pre-release versions.

lodion

3mo ago

•

100%

The server is overdue for a reboot, I'll do it late tonight before I go to bed.. if I remember 😁

lodion

3mo ago

•

100%

Seconded.

I agree wholeheartedly.

lodion

3mo ago

•

100%

Cloudfront? No. Cloudflare? Yes 😀

Edit: affecting AZ I mean.

lodion

3mo ago

•

100%

The native lemmy web interface works from mobile devices pretty well.

lodion

4mo ago

•

100%

I'm happy for someone to run one, but don't have time to run it myself.

lodion

4mo ago

•

100%

I tried to get on the 35 recently while in Melbourne, right from the start of the loop at Waterfront City. It didn't show for 3 scheduled departures. I phoned the information number to ask if it was running: "yes, it will be there in 5 minutes"... "are you sure, it's not been here the last two scheduled times?"... "yes absolutely it will be there". Surprise, it didn't show.

I don't mind things not running, life happens... but don't feed bad info to visitors.

lodion

4mo ago

•

100%

I've just moved to a Boost annual prepaid plan. I was paying Belong $15 per month, but they're about to bump the price to $21 per month... making it slightly more than the Boost option, but only on the wholesale network. Cheaper and better coverage? Sign me up!

lodion

4mo ago

•

100%

Hey Dave, yeah I know about the issues with high latency federation. Have been in touch with Nothing4You, but not discussed the batching solution.

Yes, losing LW content isn't great... but I don't really have the time to invest in implementing a workaround. I'm confident the lemmy devs will have a solution at some point... hopefully when they do the LW admins upgrade pronto 🙂

lodion

4mo ago

•

100%

The biggest gotcha with 0.19.4 is the required upgrades to postgres and pict-rs. Postgres requires a full DB dump, delete, recreate. Pict-rs requires its own backend DB migration, which can take quite a bit of time.
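The dump, delete, recreate sequence can be written down as a dry-run plan. This is only a sketch under assumptions: the database user and dump path are placeholders, the manual steps depend on how Postgres is deployed (docker or otherwise), and nothing is executed; the script just prints the order of operations. `pg_dumpall` and `psql` are the standard Postgres tools for a full dump and re-import.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Step:
    description: str
    command: Optional[List[str]] = None  # None marks a manual, deployment-specific step


def postgres_major_upgrade_plan(db_user: str, dump_path: str) -> List[Step]:
    """Return the ordered steps for a dump-and-restore major version upgrade."""
    return [
        Step("Dump every database while the old Postgres version is still running",
             ["pg_dumpall", "-U", db_user, "-f", dump_path]),
        Step("Stop Postgres and move the old data directory aside"),
        Step("Start the new Postgres version so it creates an empty cluster"),
        Step("Re-import the dump into the new cluster",
             ["psql", "-U", db_user, "-d", "postgres", "-f", dump_path]),
    ]


if __name__ == "__main__":
    # "lemmy" and "dump.sql" are hypothetical values for the printout.
    for number, step in enumerate(postgres_major_upgrade_plan("lemmy", "dump.sql"), 1):
        shown = " ".join(step.command) if step.command else "(manual step)"
        print(f"{number}. {step.description}: {shown}")
```

Keeping the dump as the first step matters: the old data directory should only be touched once the dump file exists and has been checked.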

Hey all, following the work over the weekend we're now running Lemmy 0.19.4. Please post any comments, questions, feedback or issues in this thread. One of the major features added has been the ability to proxy third party images, which I've enabled. I'll be keeping a closer eye on our server utilisation to see how this goes...

This weekend I'll be working to upgrade AZ to lemmy [0.19.4](https://join-lemmy.org/news/2024-06-07_-_Lemmy_Release_v0.19.4_-_Image_Proxying_and_Federation_improvements), which requires changes to some other back end supporting systems. Expect occasional errors/slowdowns, broken images etc. Once complete, I'll be making further changes to enable/tweak some of the new features.

UPDATE: one of the back end component upgrades requires dumping and reimporting the entire lemmy database. This will require ~1 hour of total downtime for the site. I expect this to kick off tonight ~9pm Perth time.

UPDATE2: DB dump/re-import going to happen ~6pm Perth time, ie about 10 minutes from this edit.

UPDATE3: we're back after the postgres upgrade. Next will be a brief outage for the lemmy upgrade itself... after I've had dinner 🙂

UPDATE4: We're on lemmy 0.19.4 now. I'll be looking at new features/settings and playing around with them.

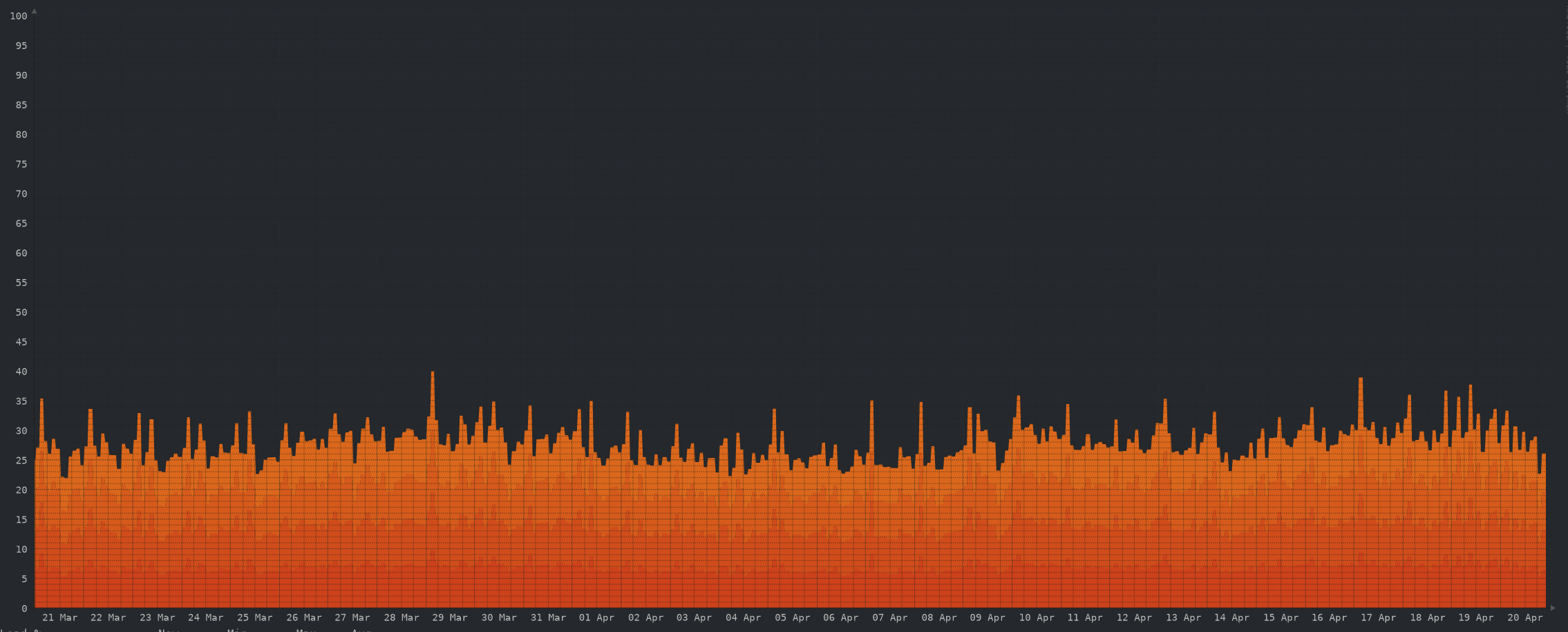

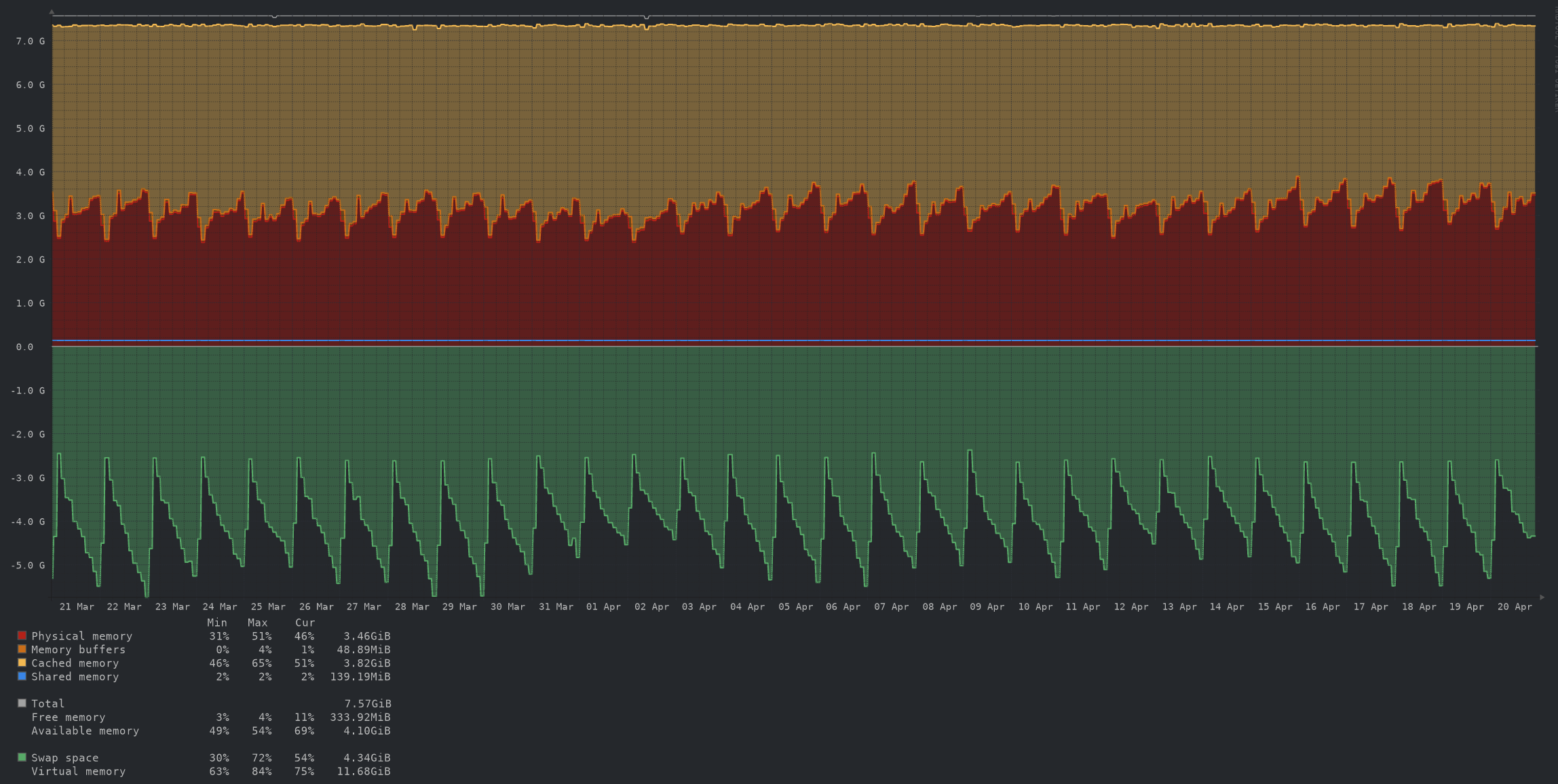

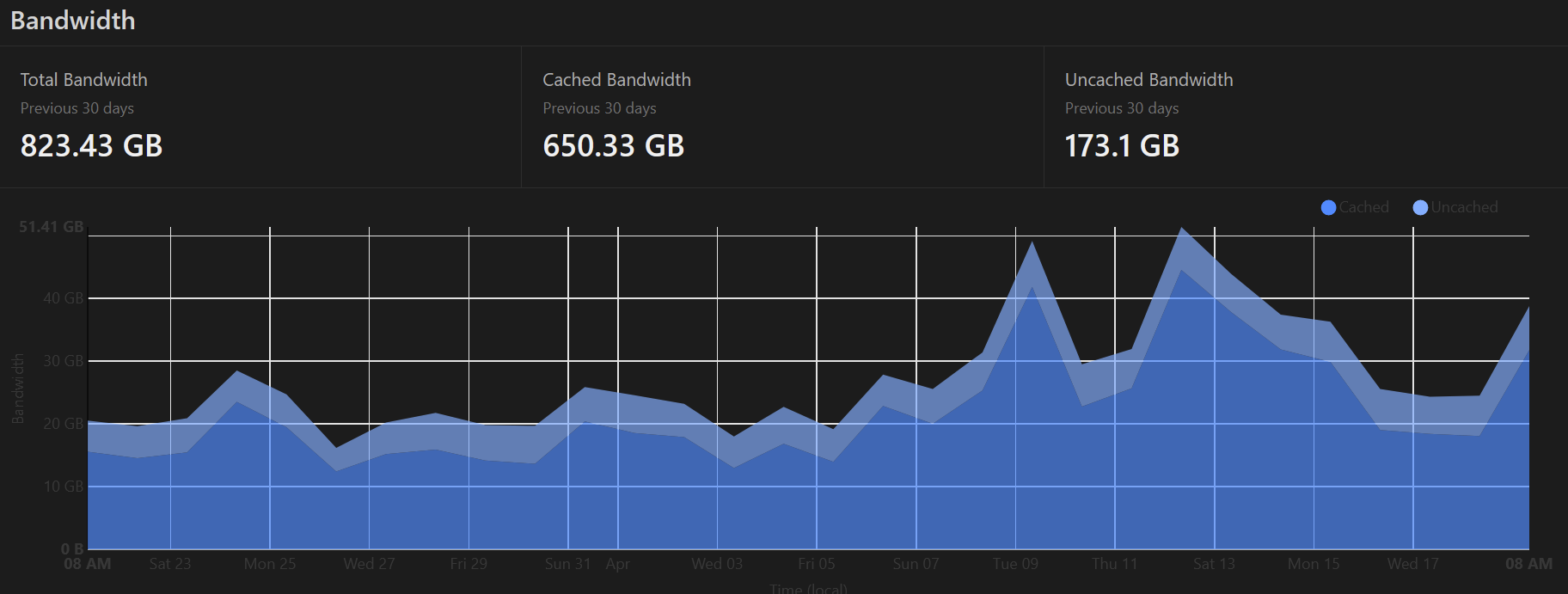

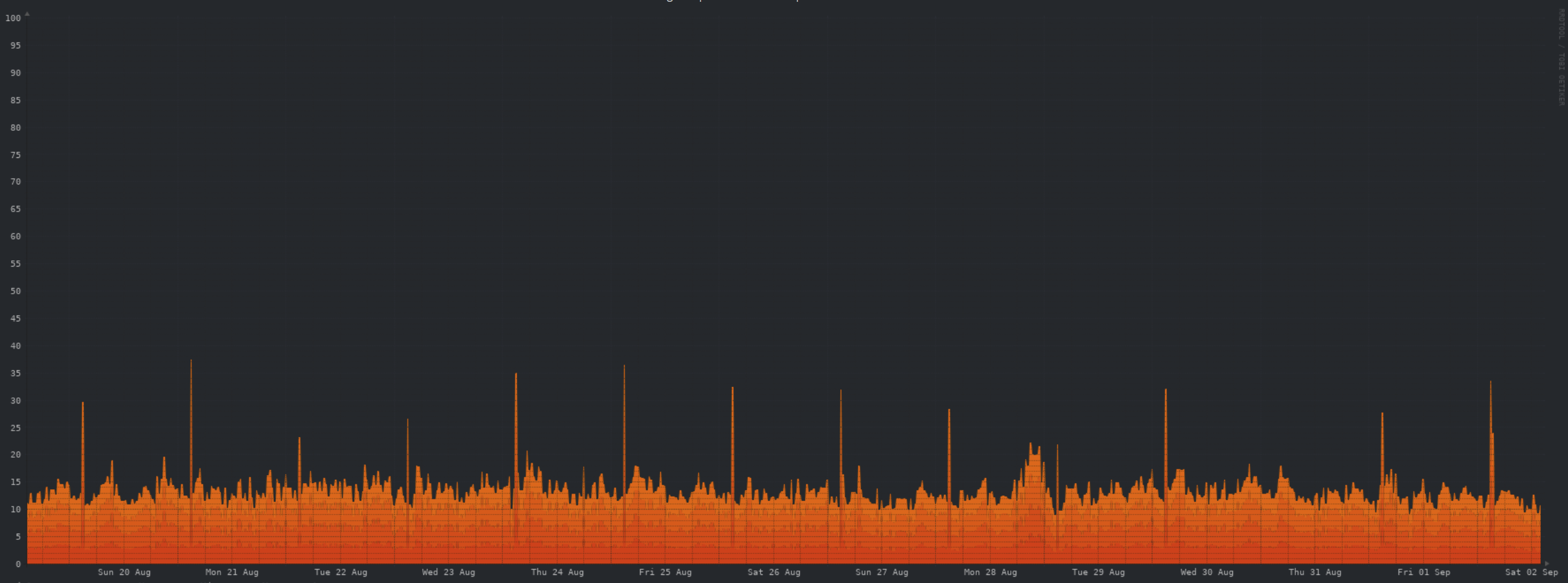

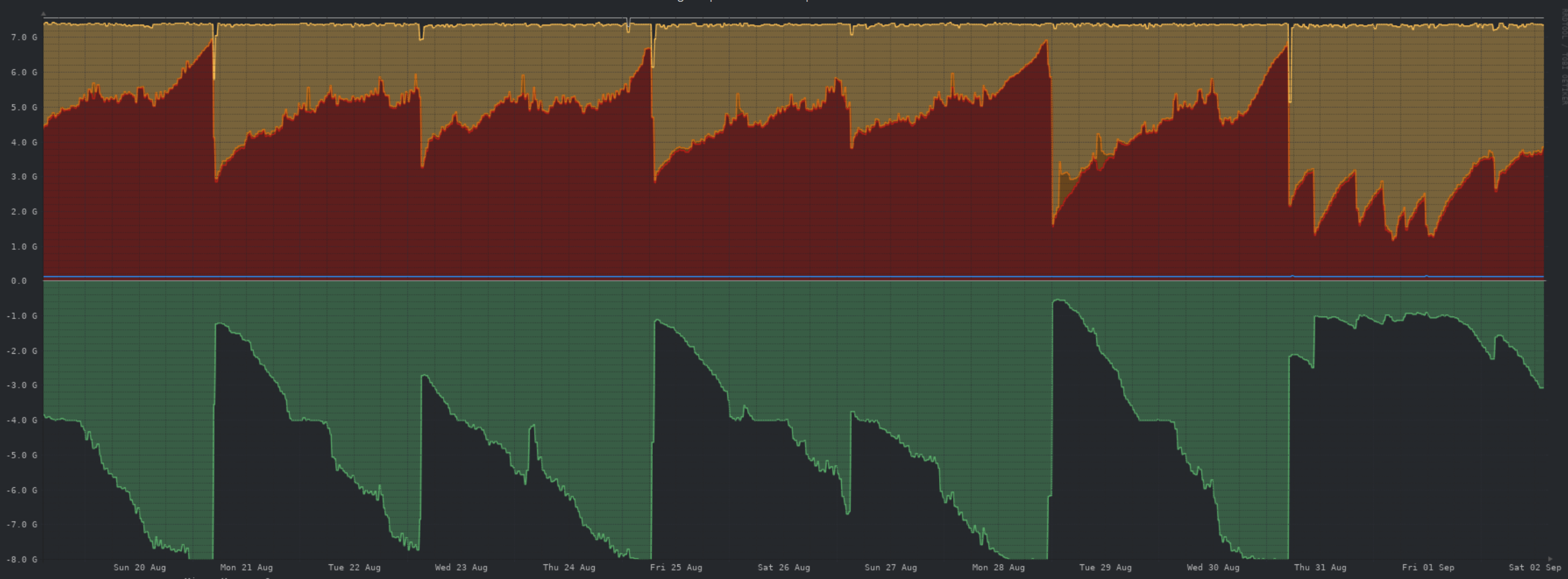

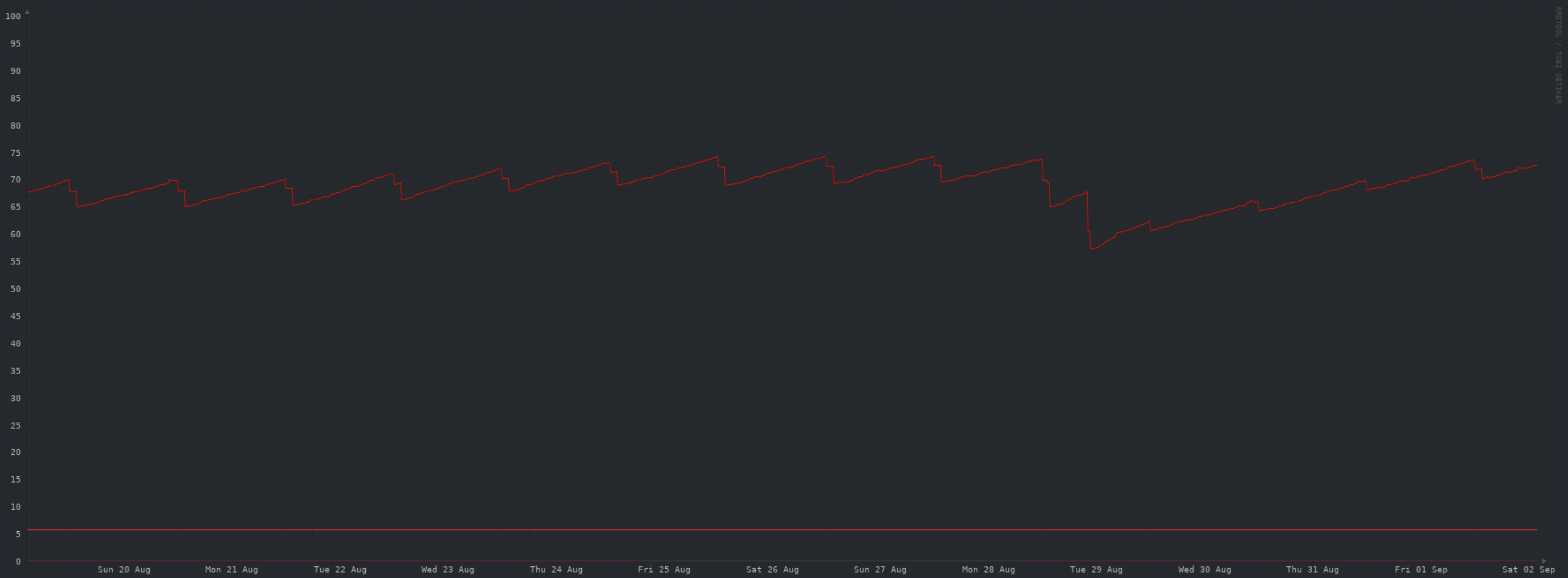

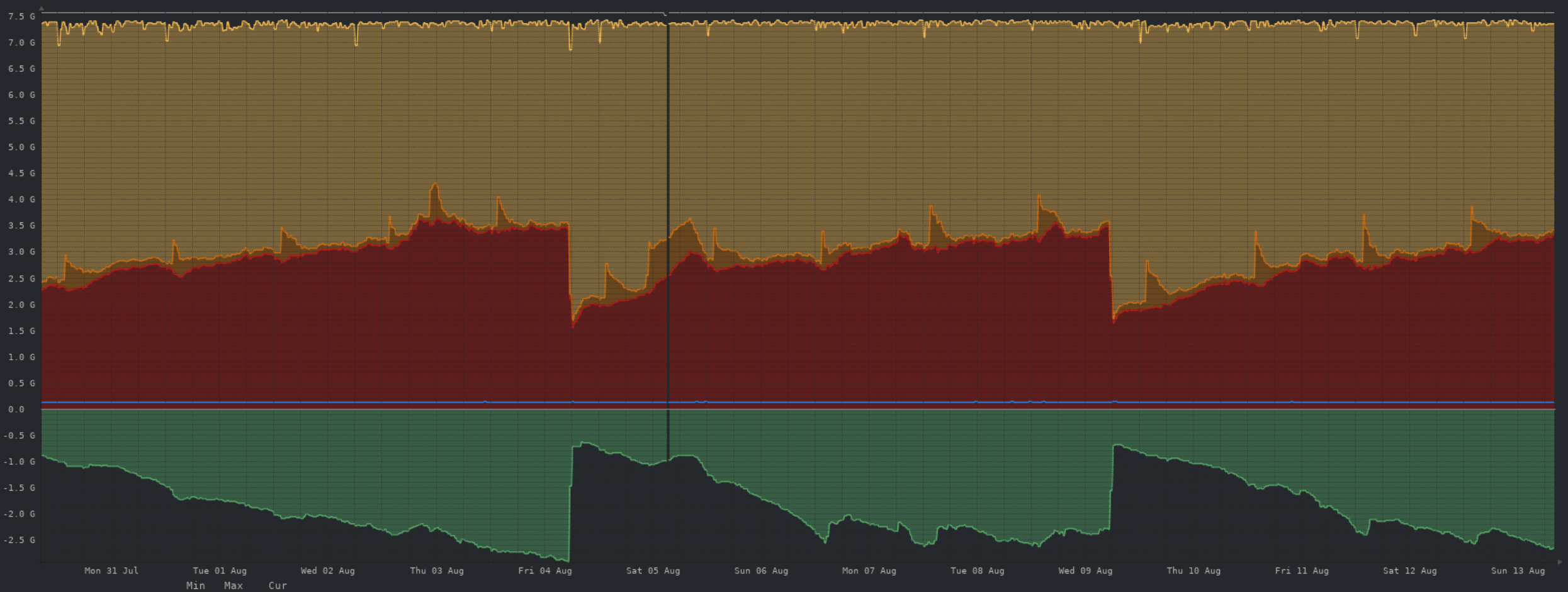

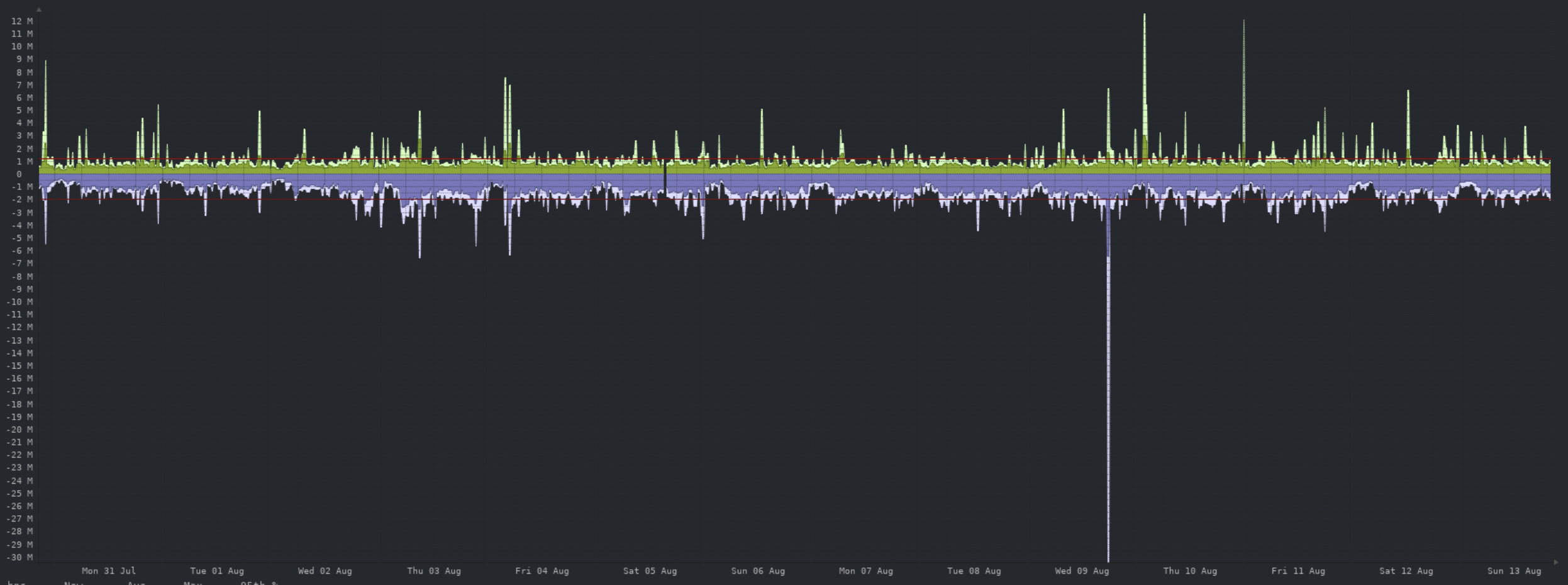

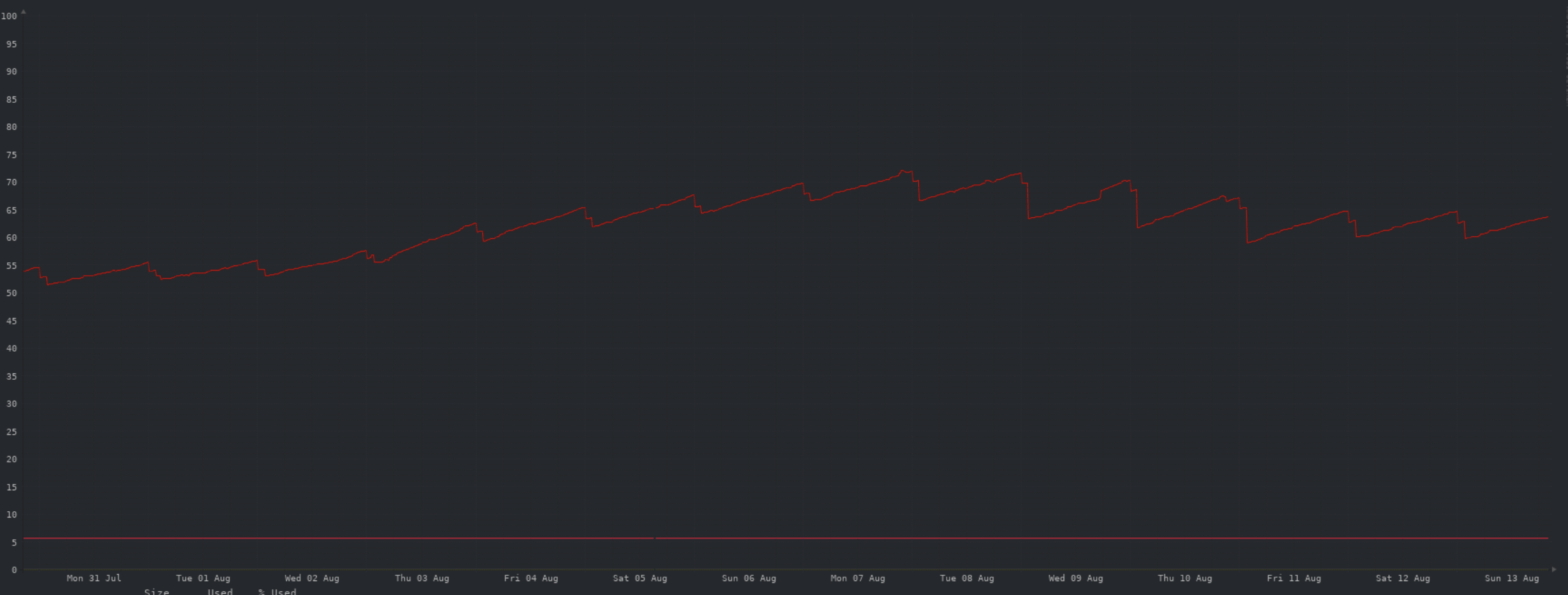

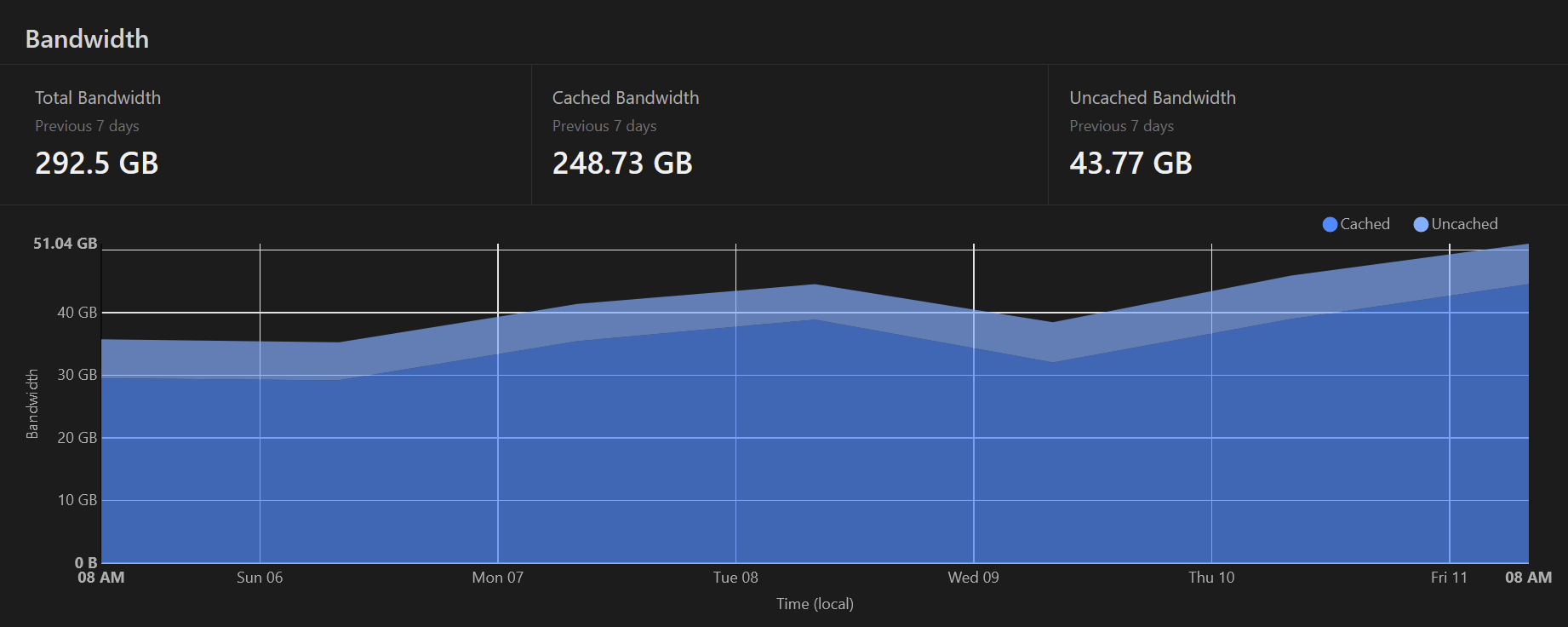

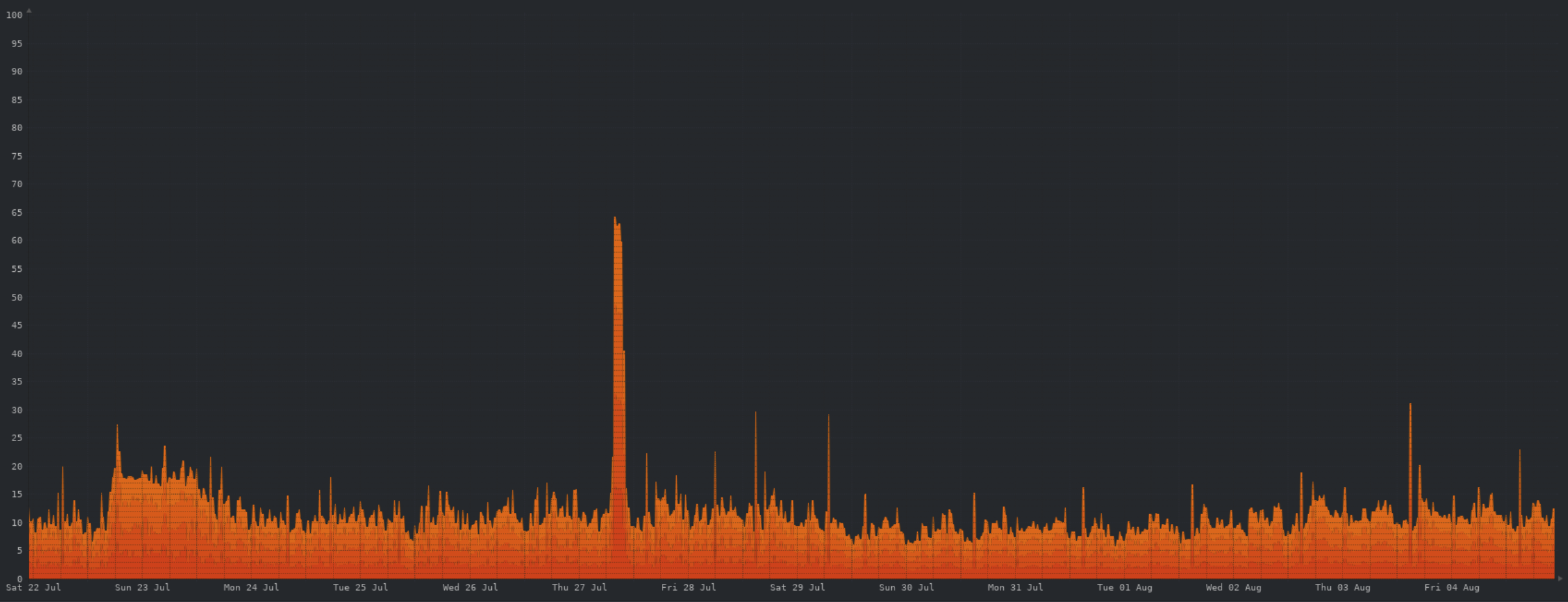

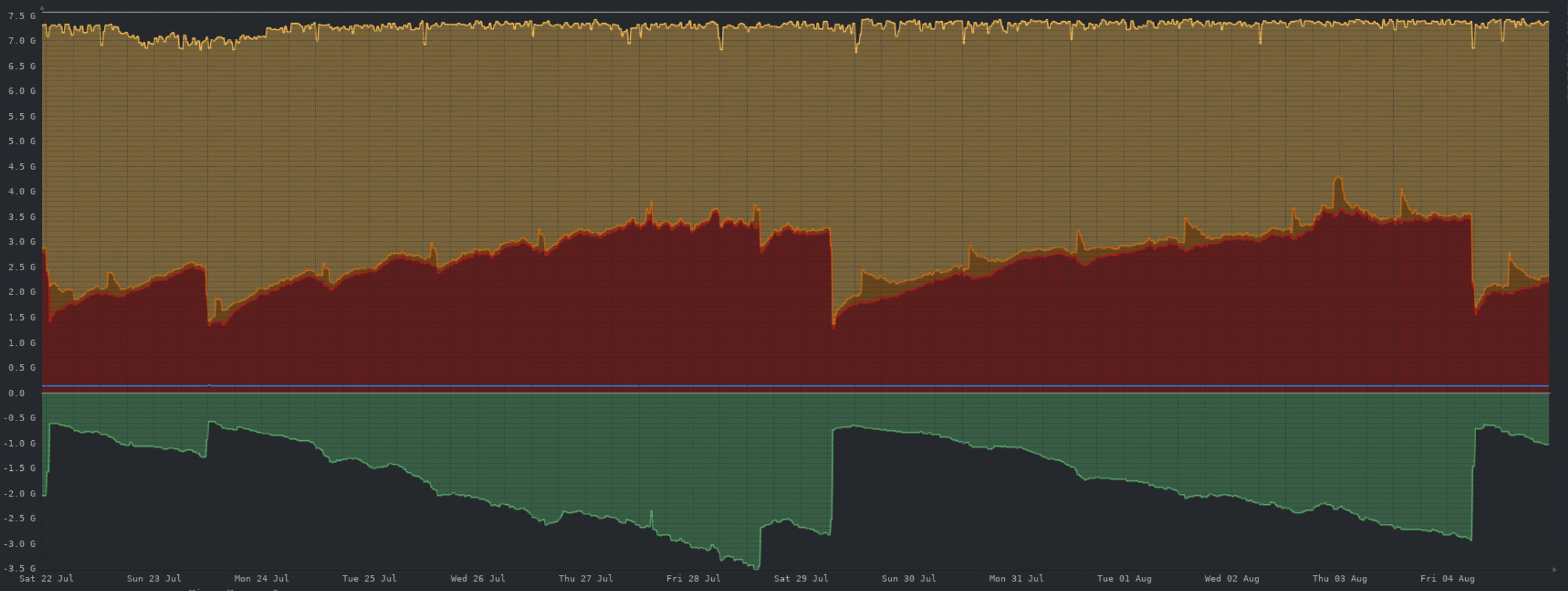

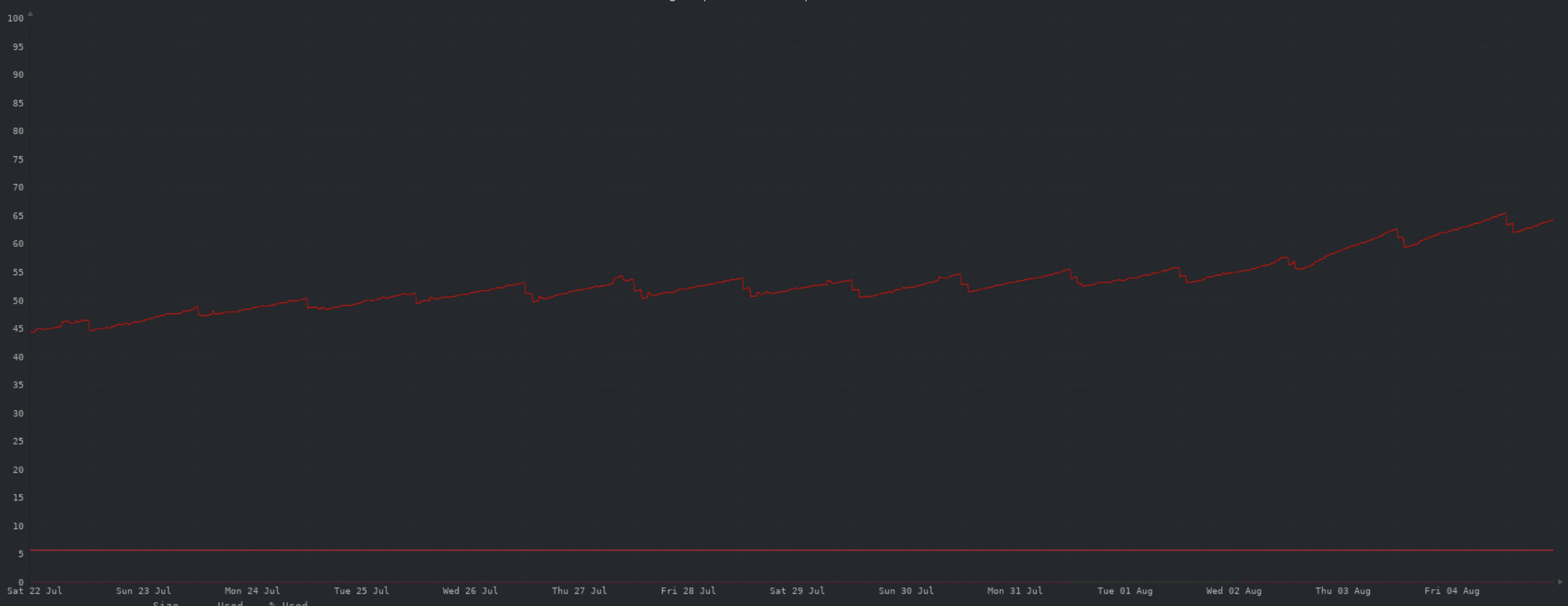

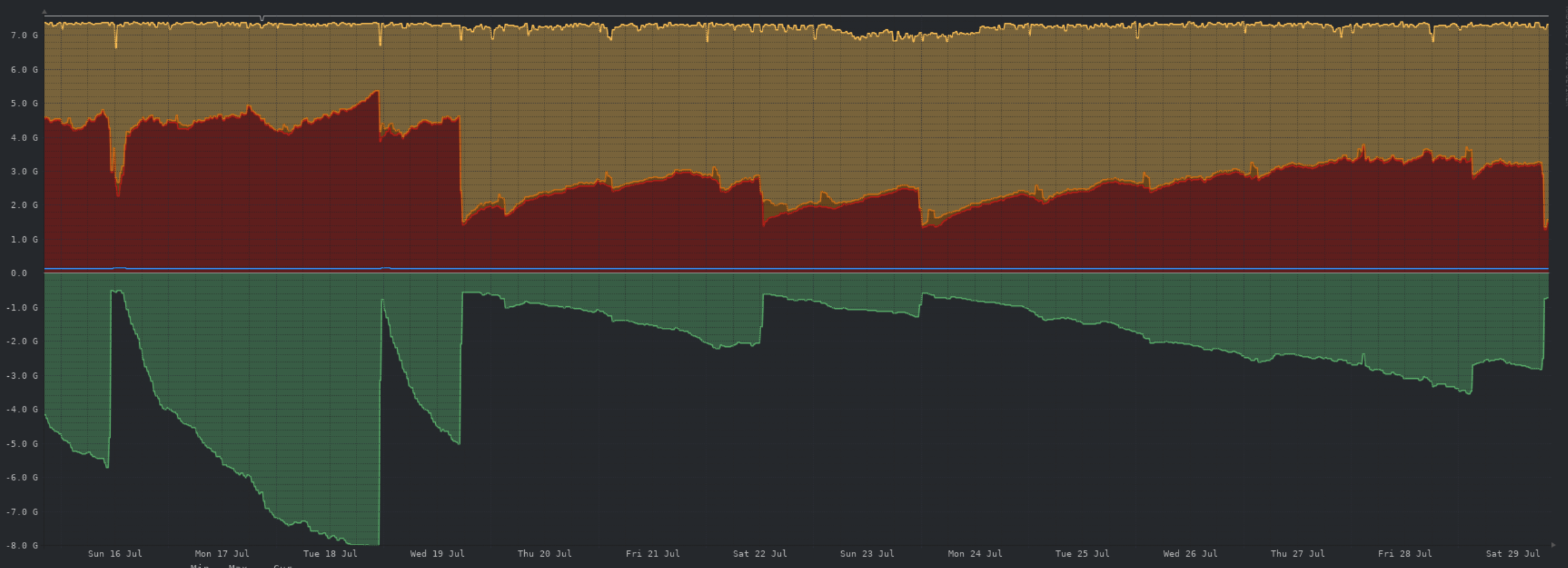

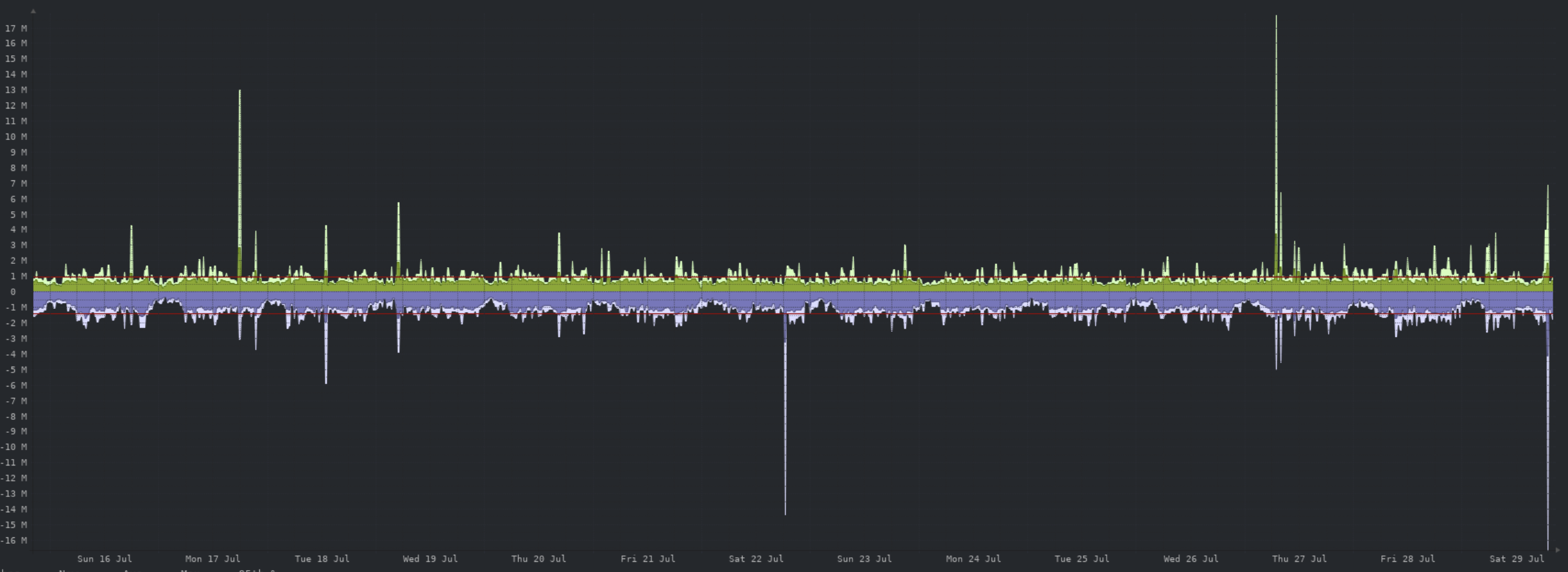

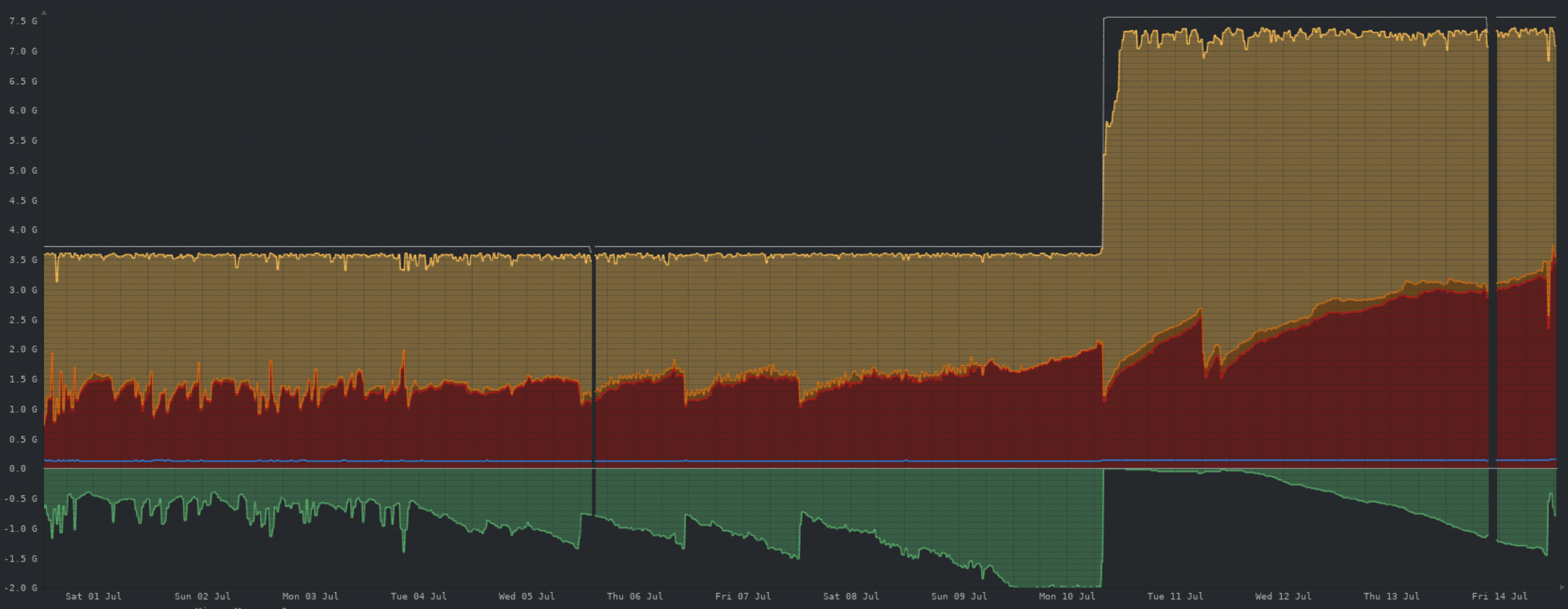

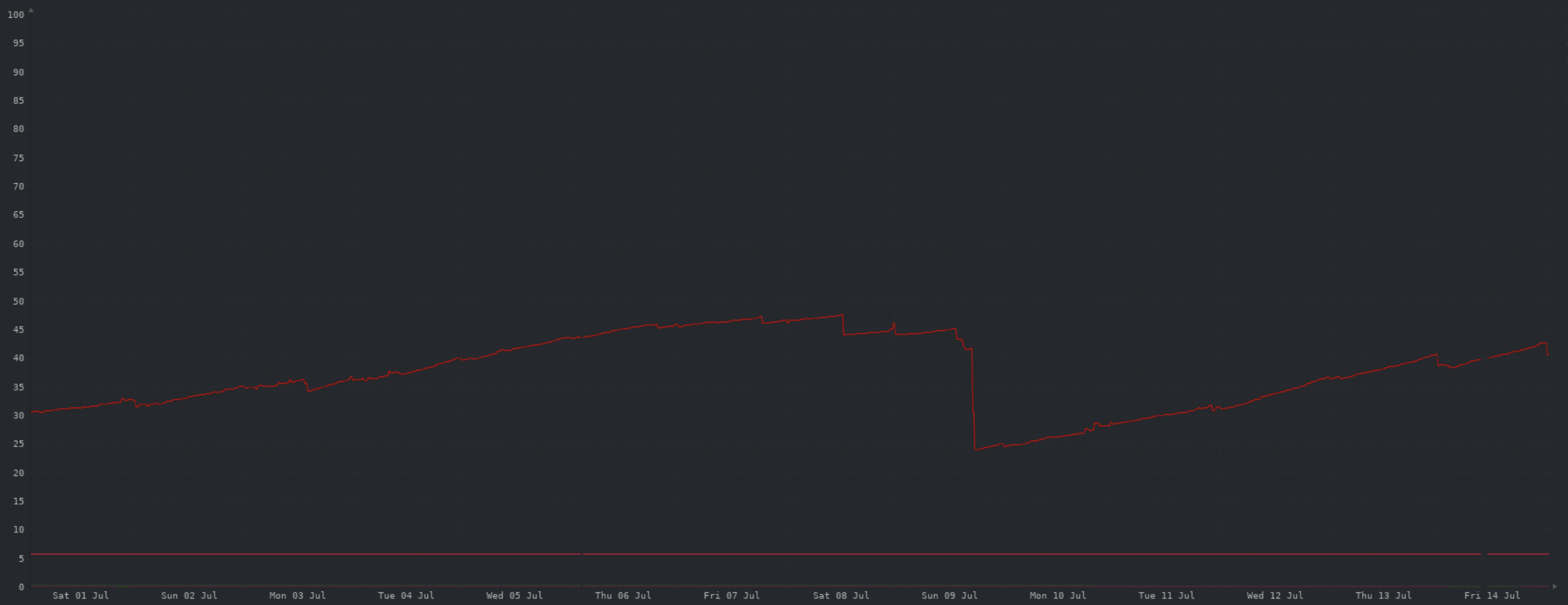

It's been a little while since I posted stuff :)

CPU:  Memory:  Network:  Storage:  Cloudflare caching:

Comments: Not much has changed in quite a while. I still have a cron-job running to restart Lemmy every day due to memory leaks, hopefully this improves with future updates. Outside of that, CPU, memory and network usage are fine. Object storage usage is growing steadily, but we're a long way from paying more than the monthly minimum Wasabi fee.

The upgrade to lemmy 0.19 has introduced some issues that are being investigated.. but we currently have no fixes for:

- thumbnails break after a time. Caused by memory exhaustion killing object storage processes.
- messages to/from lemmy instances not yet running 0.19 are not federating. I believe it requires bugfixes by the devs.

~~I've re-enabled 2 hourly lemmy restarts. Hopefully this will help with both issues, though it will result in a disruption to the site around every couple of hours. When the hourly restarts are disabled I'll unpin this post. As any other issues are identified I'll post them here too.~~

Update: I've disabled the 2 hourly restart after upgrading to 0.19.2... let's see how this goes...

Update2: no issues seen since the upgrade, looks to have resolved both the memory leak and the federation issues. Hooray :)

test

~~I'll be restarting AZ today for an update to lemmy 0.19.~~ Upgrade complete.

This is a major upgrade, so I expect there to be some issues. Strap in, enjoy the ride. Expect:

- further restarts
- bugs
- slowdowns
- logouts
- 2FA being disabled
- possibly issues with images, upgrading pictrs to 0.4 at the same time

Hi all, Looks like I'll be visiting Melbourne in winter 2024 for a wedding with my family. Any must-see tourist attractions you can suggest? We'll definitely be hitting up Melbourne zoo, and maybe Werribee. Thinking I'll have to hire a car.. though I'd prefer to stay central and use public transport and Uber around when required.

I'm kicking off a storage upgrade on the server, expect it to proceed in the next 15 minutes and be back online shortly after.

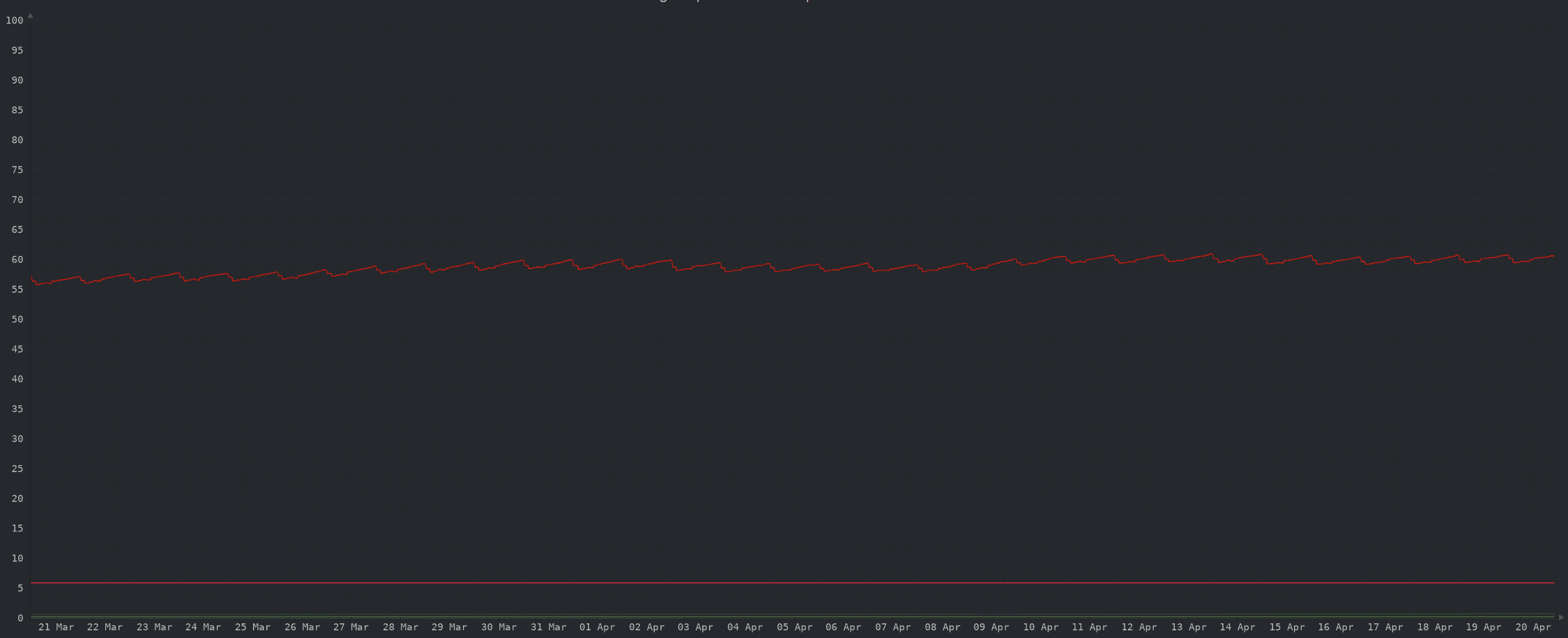

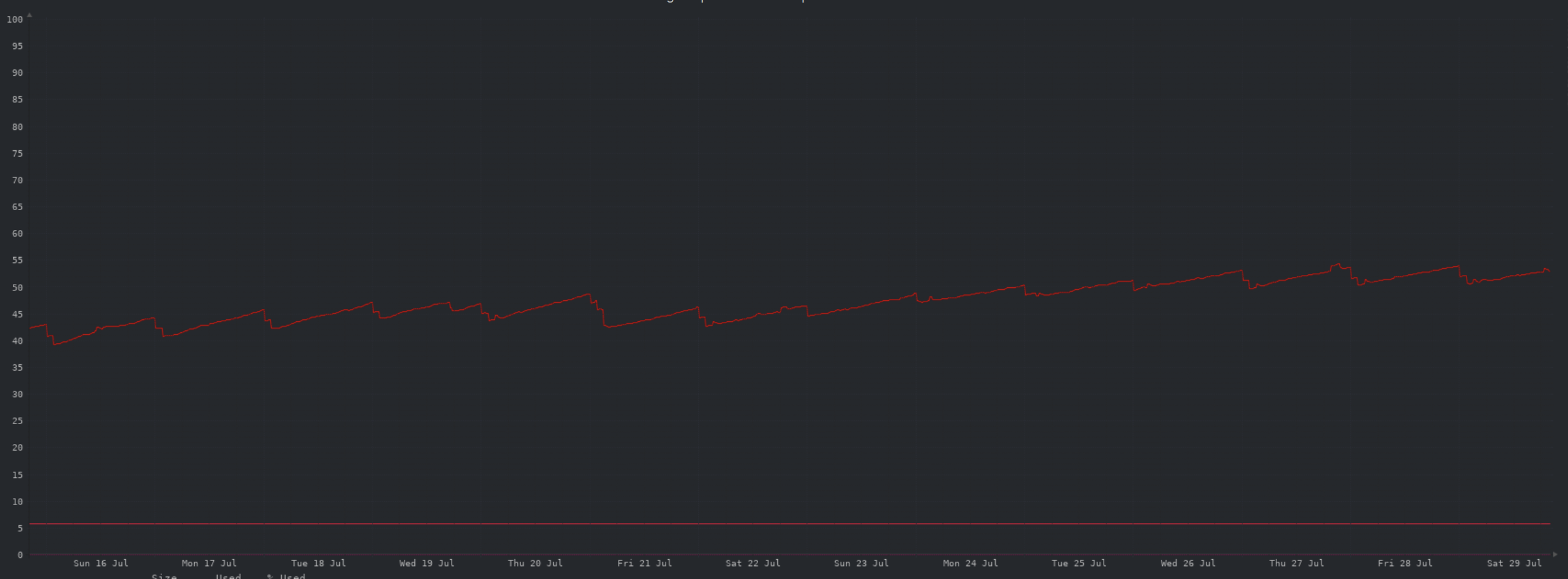

Here we are, another month down.. still kicking 😀

As usual I’m stating full dollar figures for simplicity, but they’re all rounded/approximate to the dollar. I’m not an accountant and it’s close enough for our purposes.

##### Income

$98 AUD thanks to 13 generous donors

##### Expenses

- $45 OVH server fees
- $10 (~$6 USD) Wasabi object storage
- $39 ($25 USD) Domain registration extended to 6th August 2025

= $94

##### Balance

+$486 carried forward from [July](https://aussie.zone/post/872589)
+$98 income for August
-$94 expenses paid in August
= $490 current balance

##### Future

Baseline storage on the server is now at ~70% at the low point of the day, time permitting I'll upgrade shortly to ensure sufficient room for growth. Doubling the current storage will cost an additional ~$12 per month.

#### THANK YOU

to everyone that has contributed to the running costs of the site.

A very special thank you to [@Nath@aussie.zone](https://aussie.zone/u/Nath) for everything he's doing here, both in public and behind the scenes. Without his help performing admin and moderator activities, this place would be a shambles. I've simply been too busy professionally and personally to pay as much attention as I need to. Thanks Nath, I (and I'm sure everyone else here) appreciate it 🤗

As always, if you have any questions please ask.
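The August figures in the post above can be sanity-checked with a few lines of Python. The numbers are copied straight from the post (whole AUD); the variable names are just for illustration.

```python
# Figures copied from the August post, rounded to whole AUD as in the post.
carried_forward = 486
income = 98
expenses = {
    "OVH server fees": 45,
    "Wasabi object storage": 10,
    "Domain registration": 39,
}

total_expenses = sum(expenses.values())
balance = carried_forward + income - total_expenses

print(f"Expenses: ${total_expenses}")  # Expenses: $94
print(f"Balance: ${balance}")          # Balance: $490
```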

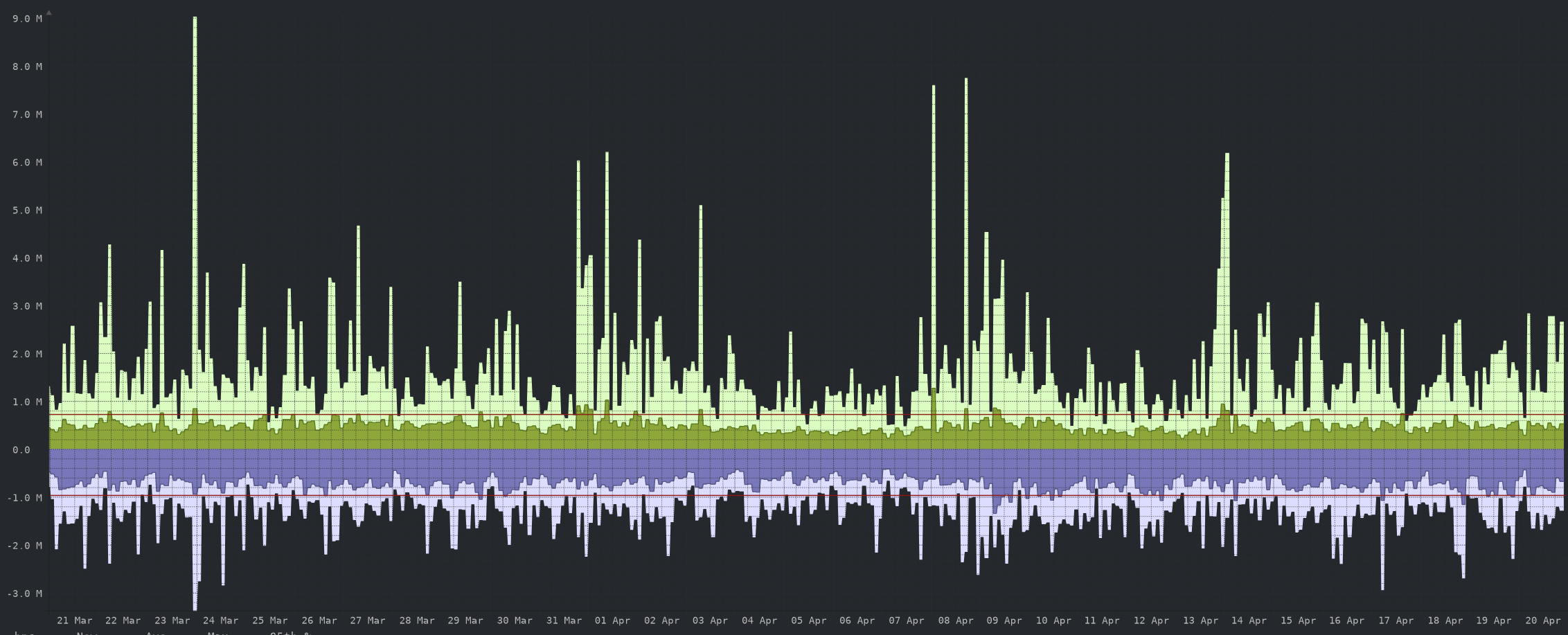

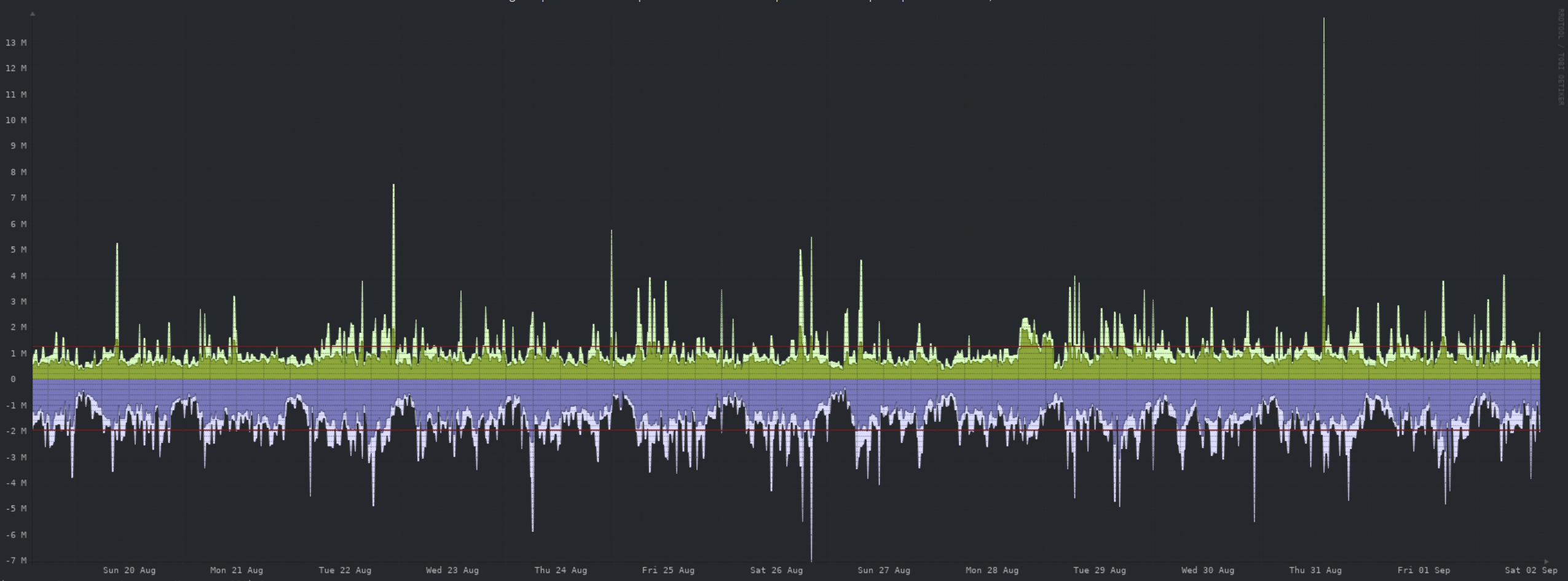

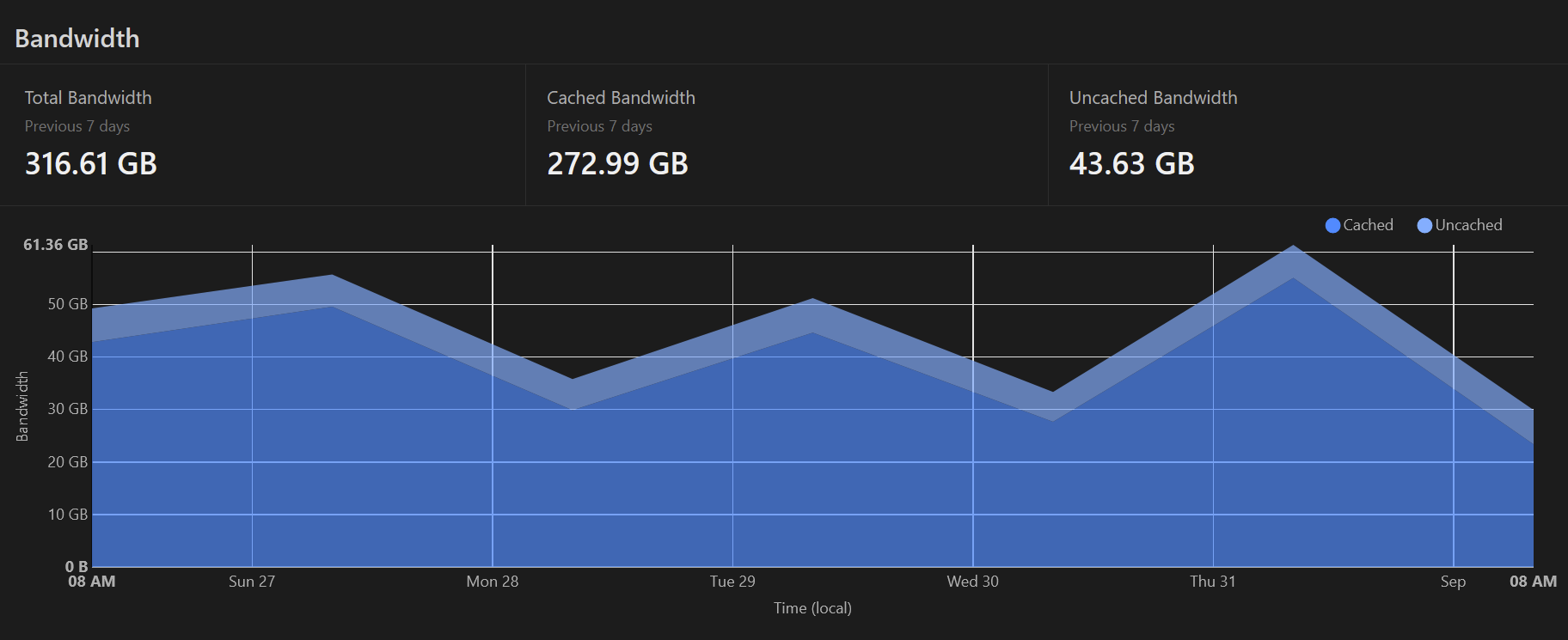

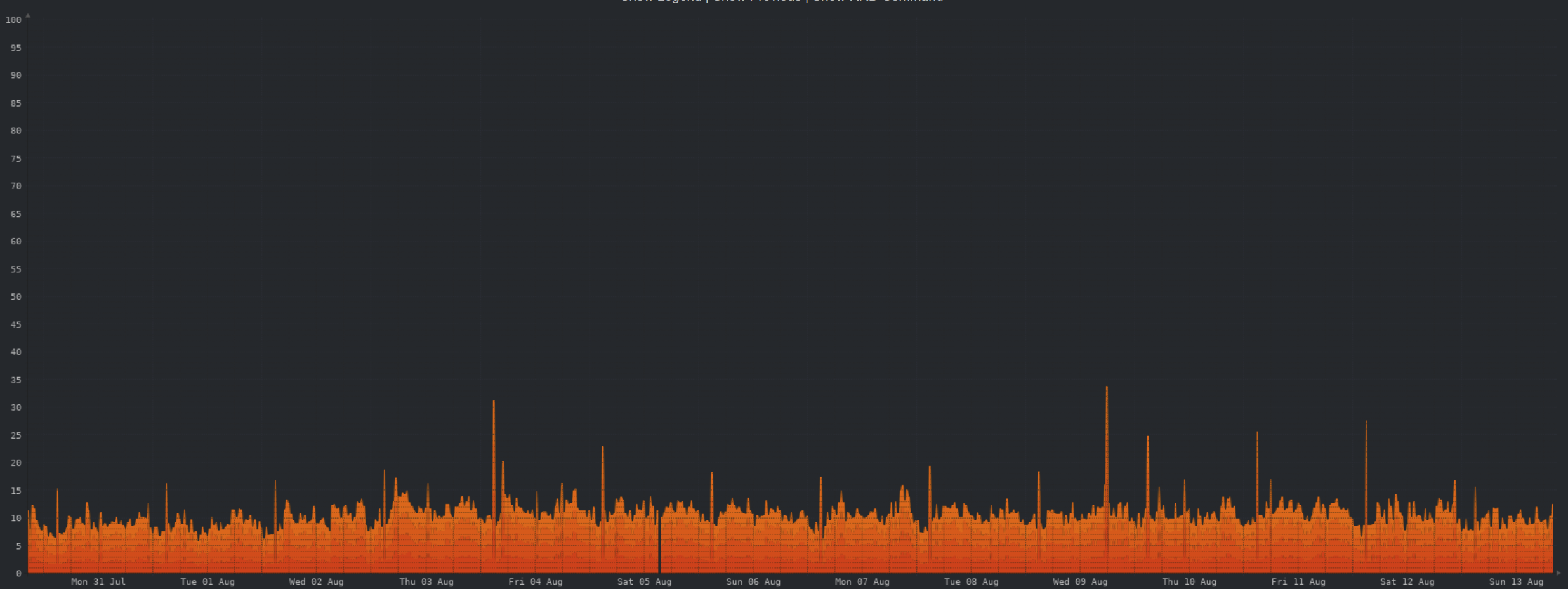

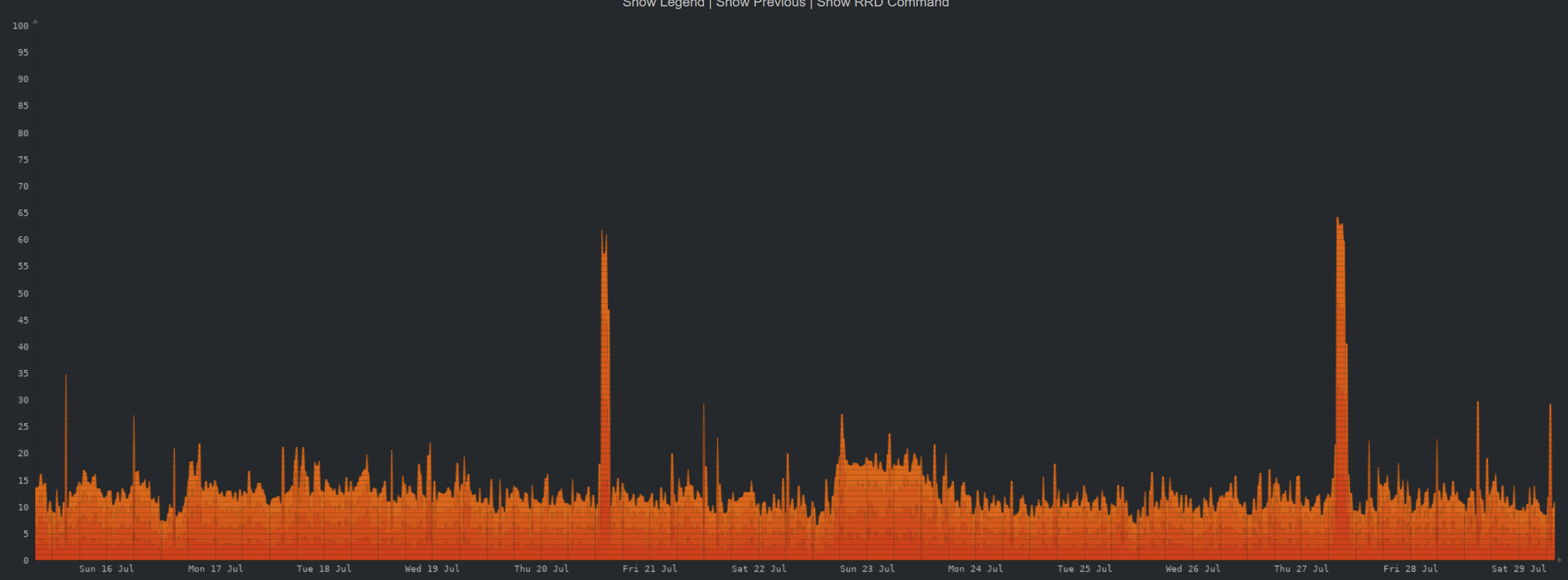

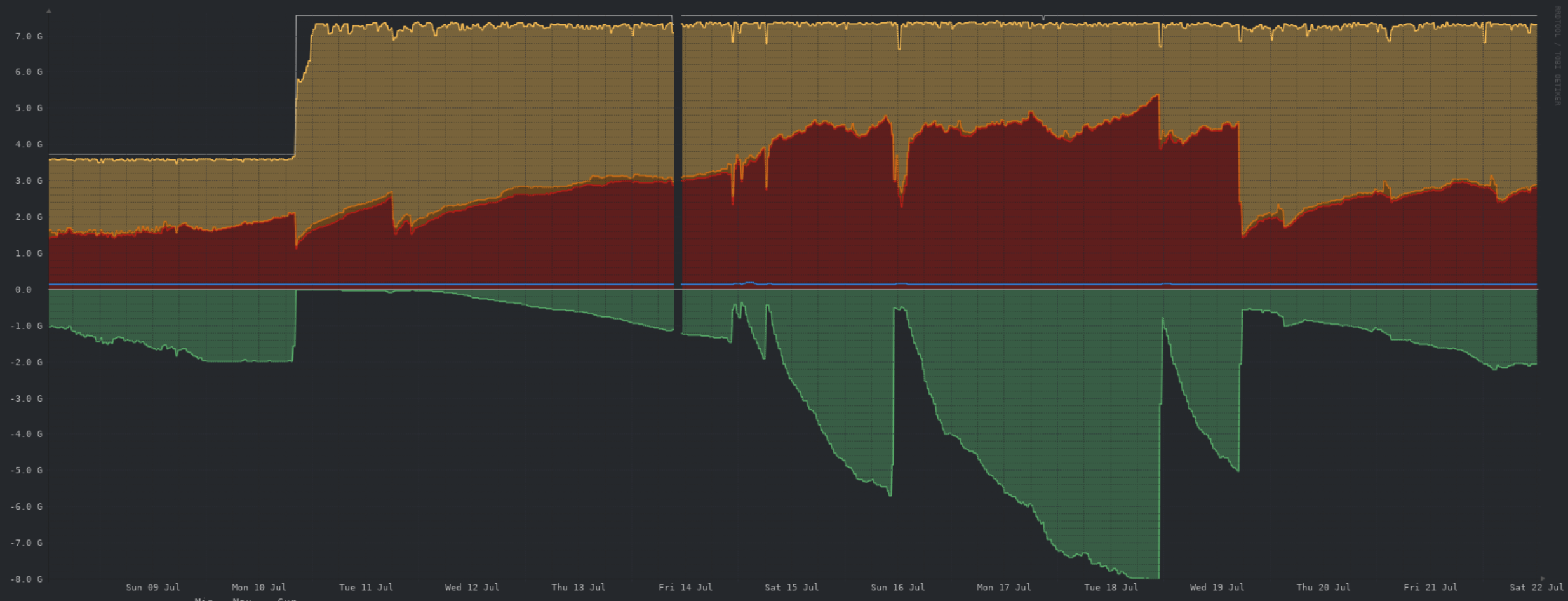

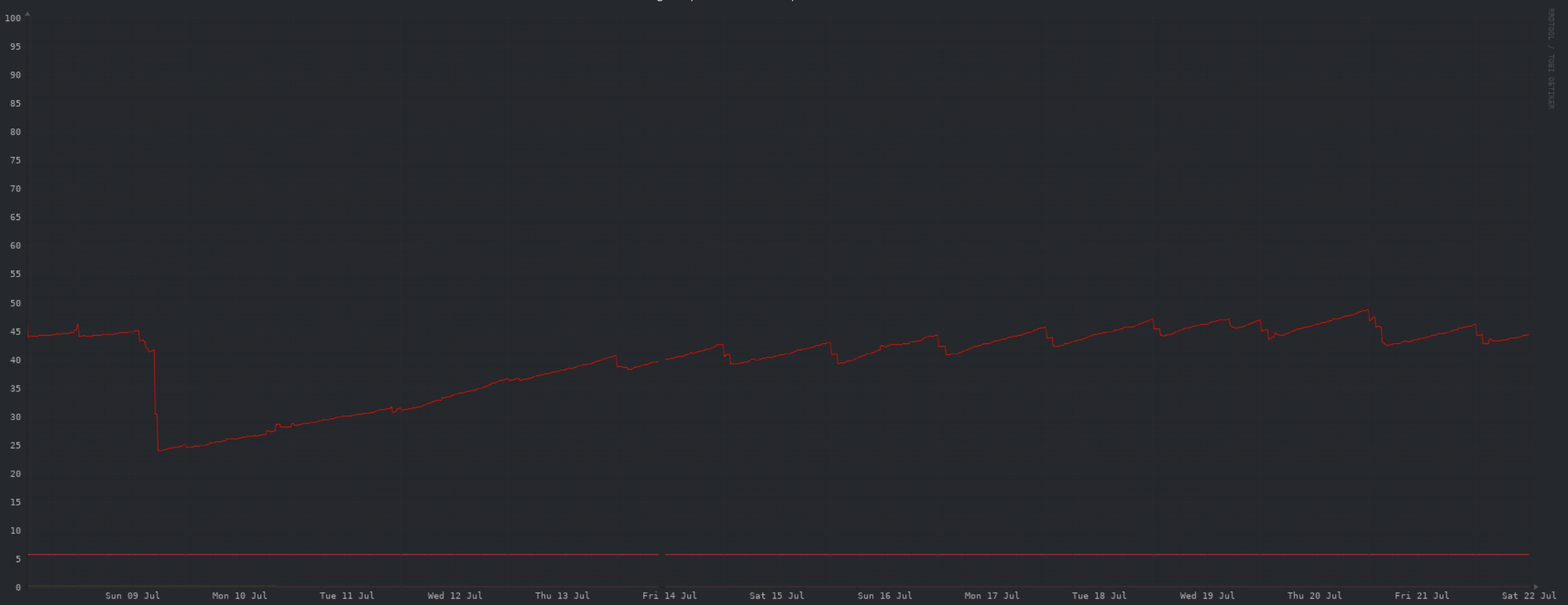

##### CPU:

##### Memory:

##### Network:

##### Storage:

##### Cloudflare caching:

##### Summary:

Not much to call out. The storage drop was due to purging a day's worth of images, and clearing the entire object storage cache. When I have time, I'll upgrade the VPS to add storage.

Due to some [disgusting](https://jamie.moe/post/113630) behaviour, I've kicked off the process of deleting ALL images uploaded in the last day. You will likely see broken images etc on aussie.zone for posts/comments during this period of time. Apologies for the inconvenience, but this is pretty much the nightmare scenario for an instance. I'd rather nuke all images ever than host such content.

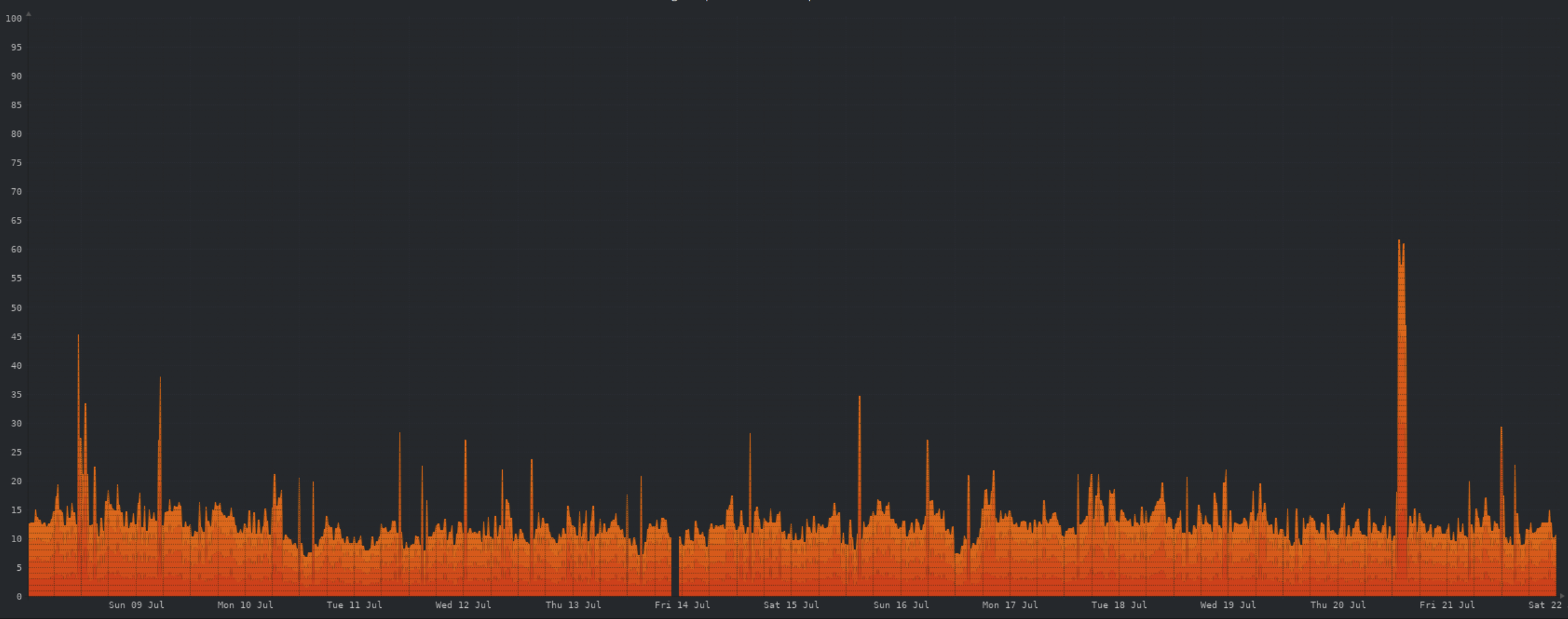

##### CPU:

##### Memory:

##### Network:

The one spike here is from a DB backup being uploaded to object storage, prior to the upgrade to 0.18.4.

##### Storage:

Still ok here. You can see the daily minimum free space increase as I tweak the local cache for object storage.

##### Cloudflare caching:

##### Summary:

Nothing of note here. Once baseline storage hits ~70% and decreasing the object storage retention period is no longer worthwhile, I'll upgrade the server for more storage.
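The ~70% baseline trigger can be expressed as a small check. This is a sketch under assumptions: "baseline" is taken to mean the lowest usage seen in a day (the point where the local object storage cache is at its smallest), and the function names and sample values are made up.

```python
import shutil
from typing import Iterable

UPGRADE_THRESHOLD = 0.70  # the ~70% baseline trigger from the post


def baseline_usage(samples: Iterable[float]) -> float:
    """Baseline is the lowest used fraction observed across a day's samples."""
    return min(samples)


def needs_upgrade(samples: Iterable[float], threshold: float = UPGRADE_THRESHOLD) -> bool:
    """True once even the daily low point has reached the threshold."""
    return baseline_usage(samples) >= threshold


def current_used_fraction(path: str = "/") -> float:
    """Used fraction of the filesystem holding `path`, for collecting samples."""
    usage = shutil.disk_usage(path)
    return usage.used / usage.total


if __name__ == "__main__":
    print(f"Currently used: {current_used_fraction():.0%}")
```

Comparing the daily minimum rather than the current reading avoids triggering an upgrade just because the cache happens to be full at that moment.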

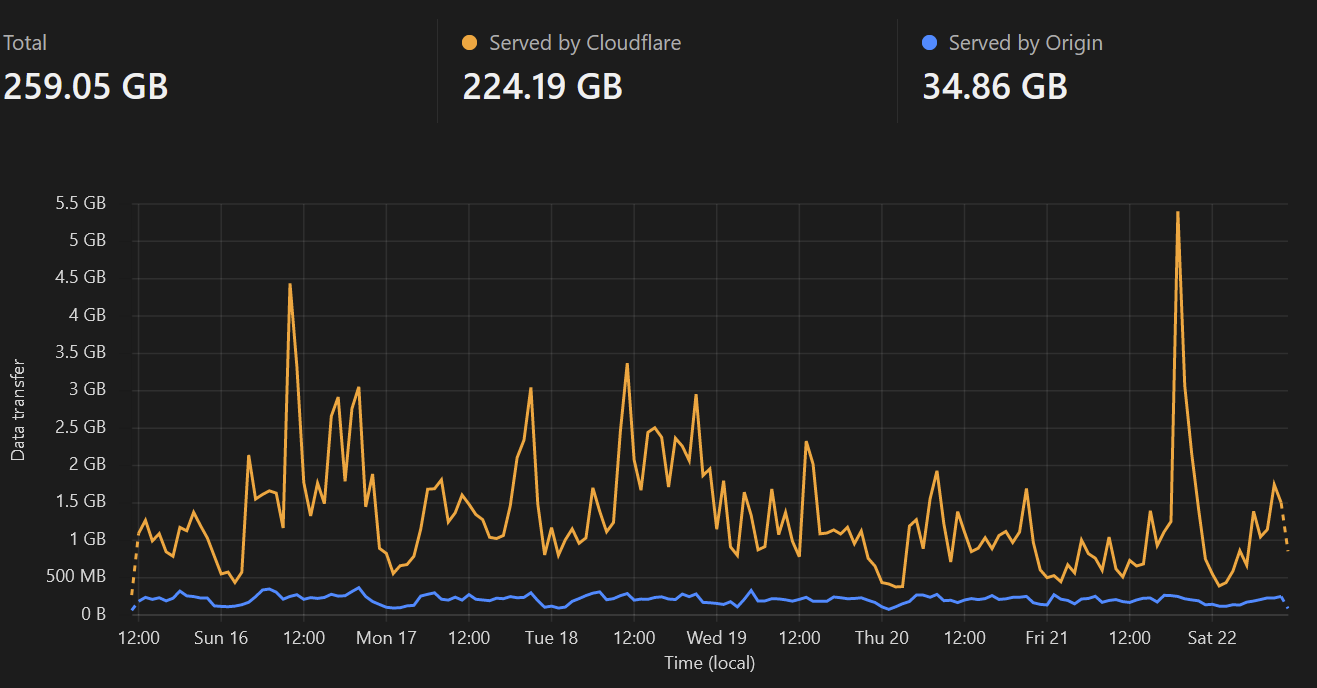

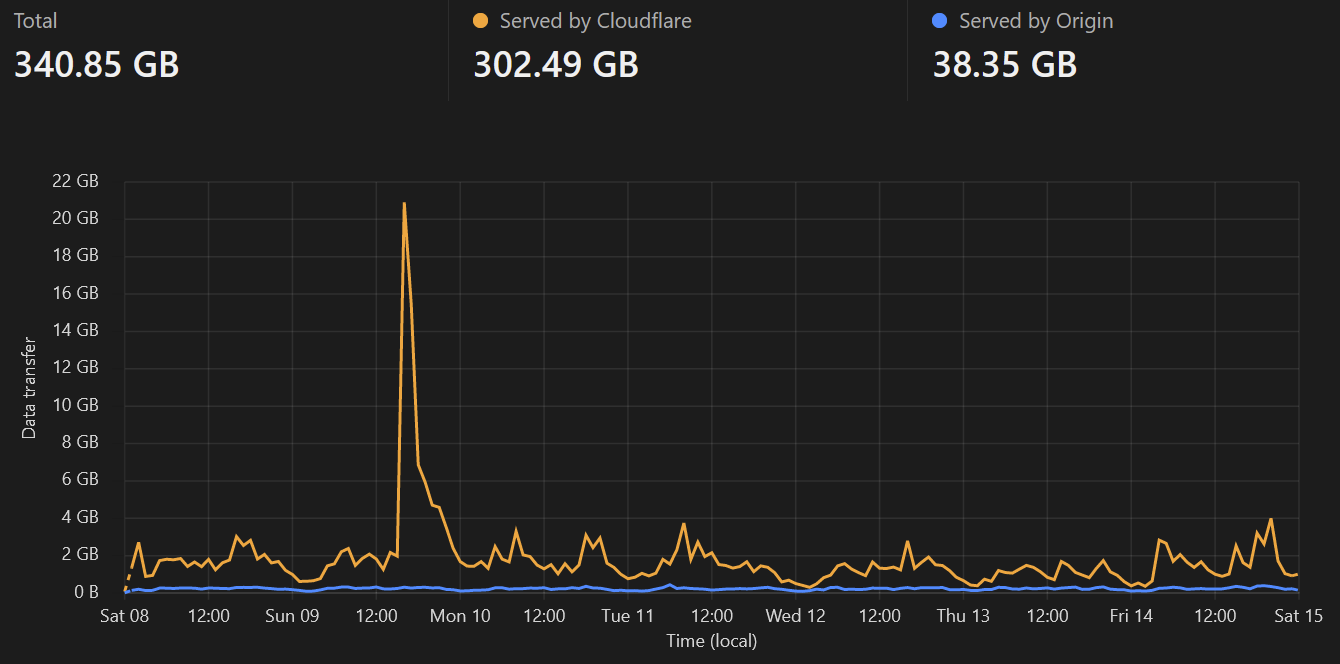

Sorry this is a little late, I've been busy with real life this week.

I'm stating full dollar figures for simplicity, but they're all rounded/approximate to the dollar. I'm not an accountant and it's close enough for our purposes.

##### Income

$267 AUD thanks to 25 generous donors, it is very much appreciated.

##### Expenses

$34 OVH server fees. Lower than normal, as OVH seem to switch services to calendar based rather than anniversary based from the second month. The July billing period covered 8 July - 31 July, August will be 1-31.

$40 Cloudflare. Due to huge volumes of egress traffic from Cloudflare in early July, I opted to pay for a month of premium in order to investigate. The cause was found and remediated. I've since dropped back to the free plan.

##### Balance

$193 surplus from July (Income - Expenses)
$293 carried forward from [June](https://aussie.zone/post/243913)
= $486 current balance

##### Future

Wasabi's trial period expired on the 8th of July, so the first bill is due this month.

Domain registration, I'll be looking to extend by another year and keep it so that we always have at least 12 months paid up.

Server storage, I expect we'll need to upgrade for additional storage this month. With this upgrade I'll consider pre-paying/committing to the server for a longer period of time. This provides both a discount, and certainty to everyone that the money is put to good use.

#### THANK YOU

..again, to our generous donors. The offer of an @aussie.zone email address redirect is still open to any donors.

As always, if you have any questions please ask.

##### CPU:

##### Memory:

##### Network:

##### Storage:

##### Cloudflare caching:

##### Summary:

My only call out this week is an uptick in storage consumption, seems to align with an increase in new user signups and general higher activity. I'm guessing this is due to the release of Sync for Lemmy.

##### CPU:

##### Memory:

##### Network:

##### Storage:

##### Cloudflare caching:

##### Summary:

All resource usage looking stable. Storage is the only one that is trending up, as expected.

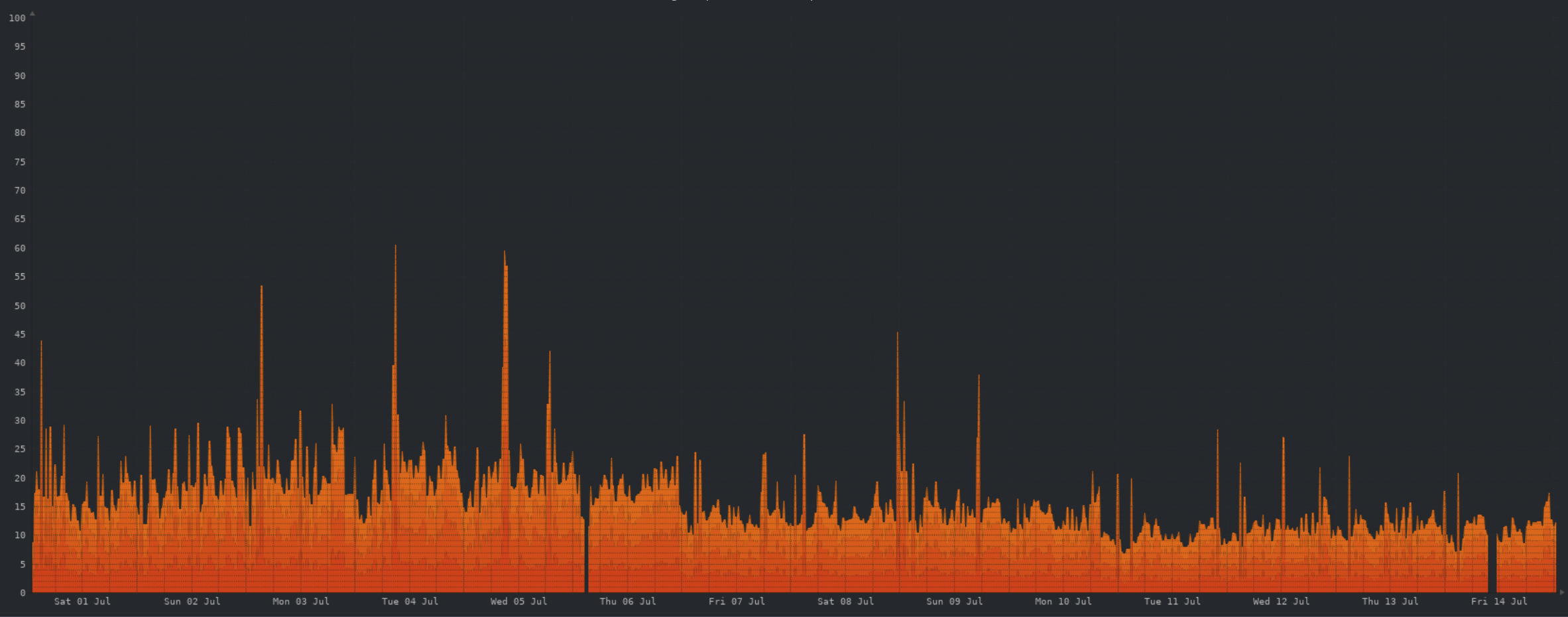

Another week... another bunch of nerd graphs!

##### CPU:

Not much to say here, pretty stable CPU usage wise.

##### Memory:

The unusual memory growth appears to have been related to a minor Postgres configuration change I made last week, which was reverted on Thursday. Memory usage looking much more normal since.

##### Network:

As with CPU usage, network traffic is looking stable.

##### Storage:

Storage growth has normalized, now that we've hit an equilibrium point. Though I'll be tweaking the object storage cache retention to minimise object storage pulls.

##### Cloudflare caching:

Still saving us a large volume of egress traffic. Will save even more if particular content goes viral.

##### Summary:

Resource utilisation on the server is looking great across the board. No skyrocketing usage as we saw initially. Storage is still looking like the first trigger for another server upgrade, but as it is now a gradual increase we'll have plenty of forewarning and it's looking like this will be some time away.

Questions? 🤓

Any chance we could get a setting to toggle the display of avatars in comments?

Doh! Forgot earlier in the night, so here you are... technically Saturday.

##### CPU:

The lemmy devs have made some major strides in improving performance recently, as you can see by the overall reduced CPU load.

##### Memory:

I need to figure out why swap is continuing to be used, when there is cache/buffer available to be used. But as you can see, the upgrade to 8GB of RAM is being put to good use.

##### Network:

The two large spikes here are from some backups being uploaded to object storage. Apart from that, traffic levels are fine.

##### Storage:

A HUGE win here this week, turns out a huge portion of the database is data we don't need, and can be safely deleted pretty much any time. The large drop in storage on the 9th was from me manually deleting all but the most recent ~100k rows in the guilty table. Devs are aware of this issue, and are [actively working](https://github.com/LemmyNet/lemmy/pull/3583) on making DB storage more efficient. While a better fix is being worked on, I have a cronjob running every hour to delete all but the most recent 200k rows.

##### Cloudflare caching:

Cloudflare still saving us substantial egress traffic from the VPS, though no 14MB "icons" being grabbed thousands of times this week 😀

##### Summary:

All things considered, we're in a much better place today than a week ago. Storage is much less of a concern, and all other server resources are doing well... though I need to investigate swap usage.

Longer term it still looks as though storage will become the trigger for further upgrades. However storage growth will be much slower and under our control. The recent upward trend is predominantly from locally cached images from object storage, which can be deleted at any time as required.

As usual, feel free to ask questions.
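The hourly "delete all but the most recent 200k rows" job boils down to a single DELETE. The sketch below shows the pattern with Python's built-in `sqlite3` as a stand-in, since the real database is Postgres and the post doesn't name the table; `activity_log`, its columns and the row counts are all hypothetical.

```python
import sqlite3


def prune_oldest(conn: sqlite3.Connection, table: str, keep: int) -> int:
    """Delete every row except the `keep` rows with the highest id.

    The table name is interpolated into the SQL, so only pass trusted names.
    Returns the number of rows deleted.
    """
    cursor = conn.execute(
        f"DELETE FROM {table} WHERE id NOT IN "
        f"(SELECT id FROM {table} ORDER BY id DESC LIMIT ?)",
        (keep,),
    )
    conn.commit()
    return cursor.rowcount


if __name__ == "__main__":
    # Small in-memory demo: 1000 rows, keep the newest 200.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE activity_log (id INTEGER PRIMARY KEY, payload TEXT)")
    conn.executemany(
        "INSERT INTO activity_log (payload) VALUES (?)",
        [(f"row {n}",) for n in range(1000)],
    )
    deleted = prune_oldest(conn, "activity_log", keep=200)
    remaining = conn.execute("SELECT COUNT(*) FROM activity_log").fetchone()[0]
    print(f"deleted {deleted}, remaining {remaining}")
```

This assumes ids increase with insertion order, so "highest id" means "most recent". On a large Postgres table a cutoff-based delete (find the id of the Nth newest row, then delete everything below it) would likely be cheaper than `NOT IN`.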